Comments

-

Interpretations of Probability

I read a few things on likelihoodism and other ideas of what is the 'right way' to show that data favours a hypothesis against a (set of) competing hypotheses. They could be summarised by the following:

Data supports one hypothesis over another iff some contrasting function of the posterior or likelihood given the data and the two hypotheses is greater than a specified value [0 for differences, 1 for ratios]. Contrasting functions could include, for example, ratios of posterior odds, ratios of posterior to prior odds, or the raw likelihood ratio.
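As a minimal sketch of what such contrasting functions look like in one concrete case: assume binomial data and two simple hypotheses about the success probability. The data, the hypotheses (labelled H1 and H2 here) and the prior odds are all made up for illustration.

```python
from math import comb

def binomial_likelihood(k, n, p):
    """Probability of k successes in n trials with success probability p."""
    return comb(n, k) * p**k * (1 - p)**(n - k)

def likelihood_ratio(k, n, p1, p2):
    """Raw likelihood ratio of H1 (p = p1) against H2 (p = p2)."""
    return binomial_likelihood(k, n, p1) / binomial_likelihood(k, n, p2)

def posterior_odds(k, n, p1, p2, prior_odds=1.0):
    """Posterior odds of H1 against H2: prior odds times the likelihood ratio."""
    return prior_odds * likelihood_ratio(k, n, p1, p2)

# Hypothetical data: 7 successes in 10 trials; H1: p = 0.7, H2: p = 0.5.
lr = likelihood_ratio(7, 10, 0.7, 0.5)
odds = posterior_odds(7, 10, 0.7, 0.5, prior_odds=0.25)
print(f"likelihood ratio = {lr:.3f}, posterior odds = {odds:.3f}")
```

With these numbers the likelihood ratio is above 1 while the posterior odds are below 1, so the verdict already depends on which contrasting function is used.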

This really doesn't generalise very well to statistical practice. I'll list some problems below; I'm sure there are more. I'll start with non-Bayesian problems:

1) Using something like a random forest regression doesn't allow you to test hypotheses in these ways, and will not output a likelihood ratio or give you the ability to derive one.

2) Models are usually relationally compared with fit criteria such as the AIC, BIC or DIC. These involve the likelihood but are also functions of the number of model parameters.

3) Likelihoodism is silent on using post-diagnostics for model comparison.

4) You couldn't look at the significance of an overdispersion parameter in Poisson models since this changes the likelihood to a formally distinct function called a quasi-likelihood.

5) It is silent on the literature using penalized regression or loss functions more generally for model comparisons.
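To make point 2 concrete, here is a minimal sketch of the AIC and BIC using their standard formulas. The two fitted models and their maximised log-likelihoods are hypothetical numbers chosen for illustration.

```python
from math import log

def aic(log_likelihood, n_params):
    """Akaike information criterion: 2k - 2 log L."""
    return 2 * n_params - 2 * log_likelihood

def bic(log_likelihood, n_params, n_obs):
    """Bayesian information criterion: k log(n) - 2 log L."""
    return n_params * log(n_obs) - 2 * log_likelihood

# Hypothetical maximised log-likelihoods for two models fitted to 100 observations.
n_obs = 100
models = {"small": (-120.0, 2), "large": (-118.5, 5)}
for name, (loglik, k) in models.items():
    print(name, aic(loglik, k), round(bic(loglik, k, n_obs), 2))
```

The larger model has the higher likelihood but the worse (higher) AIC and BIC, which is exactly the kind of comparison a pure likelihood ratio does not express.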

Bayesian problems:

1) Bayes factors (a popular contrasting function from above) are riddled with practical problems. Most people wanting to test the 'significance' of a hypothesis instead see if a test parameter value belongs to a 95% posterior quantile credible interval [the thing people want to do with confidence intervals but can't].

2) It completely elides the use of posterior predictive checks for calibrating prior distributions.

3) It completely elides the use of regularisation and shrinkage: something like the prior odds ratio of two half Cauchies or t-distribution on 2 degrees of freedom wouldn't mean much [representing no hypothesis other than the implicit 'the variance is unlikely to be large', which is the hypothesis in BOTH numerator and denominator of the prior ratio].
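The credible interval check in point 1 can be sketched as follows, assuming posterior samples are available. The Beta posterior and the test value 0.5 are hypothetical, and `quantile_interval` is just an illustrative helper name.

```python
import random

def quantile_interval(samples, level=0.95):
    """Equal-tailed interval from posterior samples using empirical quantiles."""
    ordered = sorted(samples)
    tail = (1 - level) / 2
    lower = ordered[int(tail * (len(ordered) - 1))]
    upper = ordered[int((1 - tail) * (len(ordered) - 1))]
    return lower, upper

rng = random.Random(0)
# Hypothetical posterior: Beta(15, 7), i.e. a uniform prior updated with
# 14 successes and 6 failures.
posterior_samples = [rng.betavariate(15, 7) for _ in range(20000)]
low, high = quantile_interval(posterior_samples)
test_value = 0.5
print(f"95% interval: ({low:.3f}, {high:.3f})")
print("test value inside interval:", low <= test_value <= high)
```

No ratio of likelihoods or odds between two named hypotheses appears anywhere in this procedure.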

The most damning thing is really its complete incompatibility with posterior diagnostics or model fitting checks in choosing models. They're functions of the model independent of its specifications and are used for relational comparison of evidence. -

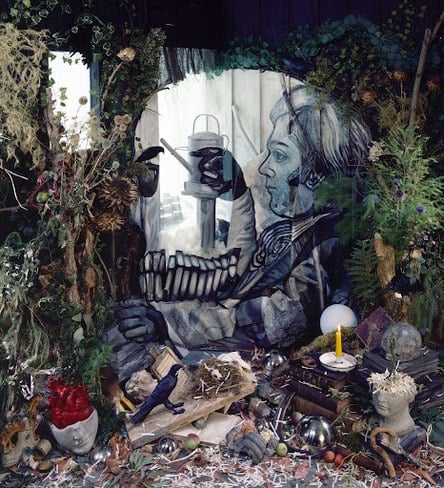

A Sketch of the Present

@'StreetlightX'

the categories that once used to exempt the body from its circuits now begin to capture it: beyond the much mentioned 'commodification' of the body (in terms of say, stem cells, DNA sequences, and other, now 'patentable' biological 'innovations'), you also get - as again charted by Cooper - the militarization of biology, where the body itself becomes a site of security concern -

Beyond commodification and warfare, one can imagine other places in which categories once applicable to non-humans gradually shade into human considerations - I have in mind Agamben's other studies on the growing indistinction between animal and human, law and life, the sacred and the profane, etc.

So again, while it's true that this represents a culmination of long-term trends, what's changed is the specificity of that movement which has now increasingly encircled even the body, which at least at one point could be left out of it. In Marxist parlance, capital has set its sights not only on the means of production, but on the means of (biological) reproduction as well. This change needs also to be tracked in tandem with the temporal shift in which capital, generalizing the debt form, now begins to place more and more importance on not just the mode of production, but on the speculative mode of prediction which underlies its upheaval of temporal categories as well (cf. the work of Ivan Ascher on the 'portfolio society' which we now inhabit). But this last is a larger point that needs elaboration.

I read this and the developments in the thread a few times. There's something I'd quite like to highlight with regard to the generalisation of the debt form: in particular, sketching how 'categories once applicable to non-humans gradually shade into human considerations' in this manner from a roughly Marxist perspective - or one way this may have happened at least.

Speculative ability has always been a feature of the expansion of accumulation; in particular, businesses typically expand through a loan in their initial stages. Attaining such a thing is classically based on estimates of the profitability of the business's endeavours. In essence, this is taking labour and attaching an exchange value to it in the future. Implicit in this is the quantification and operationalisation (numerical representation) of the accumulation of capital itself. I think the way you usually denote this procedure is intensification. This description raises the question: how do we get from the intensification of a business's future to the intensification of the individual? This is an essentially historical question, but I would like to say that a reasonable amount of the blame can be stuck on health insurance.

Health insurance in its earliest form in America popped up during the Great Depression. [An aside: maybe this is a historical predecessor of precarity] This was typically to ensure that if an individual suffered an accident, then they would be compensated. If you attempt to do a profit maximisation on this, it encourages speculative activity from insurers and an evaluation of how likely and how soon an individual is to receive a payment from it. This would then not just be a quantification of an individual's current health, but of their future health prospects - conceiving of the individual as a risk profile to be hedged and managed. If an individual is deemed an acceptable risk (+expected money) then their money will be used in speculation to get more money. Thus the individual's body is intensified through their predicted value.

It would then be a question of how the intensification of the body spread to the level of ideology rather than as part of business practice. I imagine the growth of group awareness of experimental science, and the development of quantitative rather than qualitative methods in those fields (especially medicine) would play a role. -

Interpretations of Probability

@'SophistiCat'

I've not met likelihoodism before, do you have any good references for me to read? Will respond more fully once I've got some more familiarity with this. -

Interpretations of Probability

It's probably true that for every Bayesian method of analysis there's a similar non-Bayesian one which deals with the same problem, or estimates the same model. I think the differences between interpretations of probability arise because pre-theoretic intuitions of probability are an amalgamation of several conflicting aspects. For example, judging a dice to be fair because its centre of mass is in the middle and that the sides are the same area vs judging a dice to be fair because of many rolls vs judging a dice to be fair because loaded dice are uncommon; each of these is an ascription of probability in distinct and non-compatible ways. In order, objective properties of the system: 'propensity' in the language of the SEP article; because the observed frequency of sides is consistent with fairness, 'long term frequency' in the SEP article; and an intuitive judgement about the uncommonness of loaded dice, 'subjective probability' in the SEP article.

An elision from the article is that the principle of indifference (part claim that 'equally possibles are equally probables' and part claim that randomness is derived from a priori equipotentiality) actually places constraints on which probability measures give rise to random variables consistent with a profound lack of knowledge of their typical values. In what sense? If the principle of indifference is used to represent lack of knowledge in arbitrary probability spaces, we could only use a probability measure proportional to the Lebesgue measure in those spaces (or counting measure for discrete spaces). IE, we can only use the uniform distribution. This paper gives a detailed treatment of the problems with this entailment.
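One well-known difficulty with forcing the uniform distribution (not necessarily the one the linked paper concentrates on) is that being uniform is not preserved when the same quantity is re-expressed. A small simulation sketch, with a hypothetical square whose side length is 'unknown' between 0 and 1:

```python
import random

rng = random.Random(1)
n = 100_000

# Indifference about the side length: uniform on [0, 1].
side = [rng.uniform(0.0, 1.0) for _ in range(n)]
# The same state of ignorance, expressed through the area of the square.
area = [s * s for s in side]

frac_side = sum(s < 0.5 for s in side) / n   # close to 0.5
frac_area = sum(a < 0.5 for a in area) / n   # close to sqrt(0.5), about 0.707

print(f"P(side < 0.5) ~ {frac_side:.3f}")
print(f"P(area < 0.5) ~ {frac_area:.3f}")
```

If the area were itself uniform on [0, 1] the second number would also be about 0.5, so indifference over the side and indifference over the area cannot both hold.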

I think it's worth noting that pre-theoretic ideas of probability don't neatly correspond to a single concept, whereas all authors in the SEP article having positions on these subjects agree on what random variables and probability measures are (up to disagreements in axiomatisation). It is a bizarre situation in which everyone agrees on the mathematics of random variables and probability to a large degree, but there is much disagreement on what the ascription of probability to these objects means [despite being a part of the mathematical treatment]. I believe this is a result of the pre-theoretic notion of probability that we have being internally conflicted - bringing various non-equivalent regimes of ideas together as I detailed above with the examples.

Another point in criticism of the article is that one of the first contributors to Bayesian treatments of probability, Jeffreys, advocates the use of non-probability distributions as prior distributions in Bayesian analysis [for variance parameters] - ones which cannot represent the degree of belief of a subject and do not obey the constraints of a probability calculus. The philosophical impact of the practice of statistics no longer depending, in some sense, on dealing with exact distributions and likelihoods is, to my knowledge, small to nonexistent. I believe an encounter between contemporary statistics research - especially in the fields of prior specification and penalised regression - and interpretations of probability would definitely be valuable and perturbing to the latter.

Lastly, it makes no mention of the research done in psychology about how subjects actually make probability and quantitative judgements - it is very clear that preferences stated about the outcomes of quantitative phenomena are not attained through the use of some utility calculus and not even through Bayes theorem. If there is a desire to find out how humans do think about probability and form beliefs in the presence of uncertainty rather than how we should in an idealised betting room, this would be a valuable encounter too. -

Chance: Is It Real?

I'm not slick at all. The game I'm playing is 'What can I do to understand whatever disagreement I have with Jeremiah?'. Now that 'Asking Jeremiah to tell me how he disagrees with me' is out of the question, I have absolutely no clue how to proceed. Your move.

-

Chance: Is It Real?

You claimed that I was misunderstanding your points and that all my comments are off the mark. I gave you an opportunity to set me straight.

No, null distributions are not supplied by God [despite how hypothesis testing is often treated in the applied sciences], they are a combination of distributional assumptions that usually allow the derivation of the test statistic and of specific values of that distribution referred to in the hypothesis. I have no idea how this relates to what you're talking about. So I'll ask again.

What actually is your question, and what do you think we disagree on? What is the distinction between choosing and making that you refer to, and how is it relevant to the discussion? Now, how is the null distribution related to the discussion? -

Chance: Is It Real?

I'm really not. What actually is your question, and what do you think we disagree on? What is the distinction between choosing and making that you refer to, and how is it relevant to the discussion?

-

Chance: Is It Real?

↪fdrake Tell me, how do you plan on making your probability distribution without data?

T_T -

Chance: Is It Real?

With regard to regularization and shrinkage, these are reasons to choose a distribution not because of the way it represents the probability of events, but because the means of their representation induce properties in models. IE, they are probability distributions whose interpretation isn't given in terms of the probability of certain events; their interpretation is 'this way of portraying the probability of certain events induces certain nice properties in my model'.
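A minimal sketch of this correspondence in the simplest case I can think of: estimating a single Normal mean. The ridge-penalised least squares estimate coincides with the posterior mean under a zero-centred Normal prior, so the prior can be read purely as a device for inducing shrinkage. The data and the variances below are made up.

```python
def ridge_mean(data, penalty):
    """Minimiser over m of sum((y - m)**2) + penalty * m**2."""
    return sum(data) / (len(data) + penalty)

def posterior_mean(data, noise_var, prior_var):
    """Posterior mean of m when y ~ Normal(m, noise_var) and m ~ Normal(0, prior_var)."""
    precision = len(data) / noise_var + 1 / prior_var
    return (sum(data) / noise_var) / precision

# Hypothetical observations; the penalty equals noise_var / prior_var.
data = [2.1, 1.9, 2.4, 2.0]
noise_var, prior_var = 1.0, 0.5
sample_mean = sum(data) / len(data)
shrunk_ridge = ridge_mean(data, noise_var / prior_var)
shrunk_bayes = posterior_mean(data, noise_var, prior_var)
print(sample_mean, shrunk_ridge, shrunk_bayes)
```

Both estimates are pulled towards 0 by the same amount; nothing requires anyone to actually believe the mean was drawn from Normal(0, 0.5).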

-

Chance: Is It Real?

The long post I made details a philosophical distinction, between frequentist and Bayesian interpretations of probability. I presented the distinction between them. To summarize: the meaning of probability doesn't have to depend on long run frequency. This was our central disagreement. I provided an interpretation of probability which is accepted in the literature showing exactly that.

If you read the other thread, you would also see I made a comment saying that the differences in probability interpretation occur roughly on the level of parameter estimation and the interpretation of parameters - the mathematical definition of random variables and probability measures has absolutely nothing to say about whether probability 'is really frequentist' or 'is really Bayesian'. I gave you an argument and references to show that the definition of random variables and probability doesn't depend on the fundamental notions you said that it did.

Furthermore, the reason I posted the technical things that I did was to give you some idea of the contemporary research on the topics and the independence of fundamental statistical concepts from philosophical interpretations of probability. If you were not a statistics student I would have responded completely differently [in the intuitive manner you called me to task for].

This is relevant because most topics in the philosophy of statistics have been rendered out-dated and out of touch with contemporary methods. The prior chosen for a statistical model doesn't have to be a distribution (look at the Jeffreys prior), IE it doesn't even have to be a probability measure. Statistical models don't have to result in a proper distribution without constraints (look at Besag models and other 'intrinsic' ones), and don't have to depend solely on the likelihood in frequentist inference [look at penalized regression, like the LASSO]. What does it even mean to say 'statistics is about probability and sequences of random events' when contemporary topics don't even NEED a specific parametric model (look at splines in generalized additive models) or even necessarily to output a distribution? How can we think of a 'book of preferences' for an agent, as classical probability/utility arguments for founding expert choice distributions go, when in practice statistical analysis allows the choice of non-distributions or distributions without expectation or variance as representative of individuals' preferences about centrality and variability?

You then asked how to choose a probability distribution without using data in some way. I responded by saying various ways people do this in practice in the only context it occurs - choosing a prior. Of course the statistical model depends on the data, that's how you estimate its parameters.

I have absolutely no interest in rehearsing a dead argument about which interpretation of probability is correct when it has little relevance to the contemporary structure of statistics. I evinced this by giving you a few examples of Bayesian methods being used to analyse frequentist problems [implicit priors] and frequentist asymptotics being used to analyse Bayesian methods. -

Chance: Is It Real?

There are lots of motivations for choosing prior distributions. Broadly speaking they can be chosen in two ways: through expert information and previous studies, or to induce a desirable property in the inference. In the first sense, you can take a posterior distribution or distribution from a frequentist paper through moment matching from previous studies, or alternatively you can elicit quantiles from experts. In the second sense, there are many possible reasons for choosing a prior distribution.
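Moment matching can be sketched like this, assuming a previous study reports a mean and variance for a proportion and that a Beta prior is wanted. The reported moments are hypothetical and `beta_from_moments` is only an illustrative helper.

```python
def beta_from_moments(mean, variance):
    """Beta(alpha, beta) parameters matching a given mean and variance."""
    if not 0 < mean < 1 or not 0 < variance < mean * (1 - mean):
        raise ValueError("moments are not attainable by a Beta distribution")
    common = mean * (1 - mean) / variance - 1
    return mean * common, (1 - mean) * common

# Hypothetical summary from an earlier study: mean 0.3, variance 0.01.
alpha, beta = beta_from_moments(0.3, 0.01)
print(f"prior: Beta({alpha:.2f}, {beta:.2f})")
```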

Traditionally, priors were chosen to be 'conjugate' to their likelihoods because that made analytic computation for posterior distributions possible. In the spirit of 'equally possible things are equally probable', there are families of uninformative prior distributions which are alleged to express the lack of knowledge about parameter values in the likelihood. As examples, you can look at entropy maximizing priors, the Jeffreys prior or uniform priors with large support. Alternatively, priors can be chosen on asymptotic frequentist principles; for this you can look at reference priors.
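The textbook instance of conjugacy is a Beta prior with a binomial likelihood, where the posterior is again a Beta and the update is plain addition. A minimal sketch with made-up counts:

```python
def update_beta_prior(alpha, beta, successes, failures):
    """Posterior Beta parameters after binomial data; no integration needed."""
    return alpha + successes, beta + failures

def beta_mean(alpha, beta):
    """Mean of a Beta(alpha, beta) distribution."""
    return alpha / (alpha + beta)

prior = (1.0, 1.0)  # uniform prior on the success probability
posterior = update_beta_prior(*prior, successes=7, failures=3)
print(posterior, round(beta_mean(*posterior), 3))
```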

Motivated by the study of random effect models, there is often a need to make the inference more conservative than a model component with an uninformative prior typically allows. If for example you have a random effect model for a single factor (with <5 levels), posterior estimates of the variance and precision of the random effect will be unstable [in the sense of huge variance]. This issue has been approached in numerous ways and depends on the problem type at hand. For example, in spatial statistics when estimating the correlation function of the Matérn field (a spatial random effect) in addition to other effects, the correlation parameter can be shrunk towards 1. This can be achieved through defining a prior on the scale of an information theoretic difference (like the Kullback-Leibler divergence or Hellinger distance). More recently, a family of prior distributions called hypergeometric inverted beta distributions has been proposed for 'top level' variance parameters in random effect models, with the celebrated Half-Cauchy prior on the standard deviation being a popular choice for regularization. -

Chance: Is It Real?

If copy-pasting the response in the other thread to you is what it takes to get you to stop being petulant, here it is:

This is a response to @Jeremiah from the 'Chance, Is It Real?' thread.

Pre-amble: I'm going to assume that someone reading it knows roughly what an 'asymptotic argument' is in statistics. I will also gloss over the technical specifics of estimating things in Bayesian statistics, instead trying to suggest their general properties in an intuitive manner. However, it is impossible to discuss the distinction between Bayesian and frequentist inference without some technical detail, so it is unlikely that someone without a basic knowledge of statistics will understand this post fully.


In contemporary statistics, there are two dominant interpretations of probability.

1) That probability is always proportional to the long-term frequency of a specified event.

2) That probability is the quantification of uncertainty about the value of a parameter in a statistical model.

(1) is usually called the 'frequentist interpretation of probability', (2) is usually called the 'Bayesian interpretation of probability', though there are others. Each of these philosophical positions has numerous consequences for how data is analysed. I will begin with a brief history of the two viewpoints.

The frequentist idea of probability can trace its origin to Ronald Fisher, who gained his reputation in part through analysis of genetics in terms of probability - being a founding father of modern population genetics, and in part through the design and analysis of comparative experiments - developing the analysis of variance (ANOVA) method for their analysis. I will focus on the developments resulting from the latter, eliding technical detail. Bayesian statistics is named after Thomas Bayes, the discoverer of Bayes' Theorem, which arose in analysing games of chance. More technical details are provided later in the post. Suffice to say now that Bayes' Theorem is the driving force behind Bayesian statistics, and this has a quantity in it called the prior distribution - whose interpretation is incompatible with frequentist statistics.

The ANOVA is an incredibly commonplace method of analysis in applications today. It allows experimenters to ask questions relating to the variation of a quantitative observation over a set of categorical experimental conditions.

For example, in agricultural field experiments 'Which of these fertilisers is the best?'

The application of fertilisers is termed a 'treatment factor', say there are 2 fertilisers called 'Melba' and 'Croppa', then the 'treatment factor' has two levels (values it can take), 'Melba' and 'Croppa'. Assume we have one field treated with Melba, and one with Croppa. Each field is divided into (say) 10 units, and after the crops are fully grown, the total mass of vegetation in each unit will be recorded. An ANOVA allows us to (try to) answer the question 'Is Croppa better than Melba?'. This is done by assessing the mean of the vegetation mass for each field and comparing these with the observed variation in the masses. Roughly: if the difference in mean masses for Croppa and Melba (Croppa-Melba) is large compared to how variable the masses are, we can say there is evidence that Croppa is better than Melba.* How?

This is done by means of a hypothesis test. At this point we depart from Fisher's original formulation and move to the more modern developments by Neyman and Pearson (which are now the industry standard). A hypothesis test is a procedure to take a statistic like 'the difference between Croppa and Melba' and assign a probability to it. This probability is obtained by assuming a base experimental condition, called 'the null hypothesis', several 'modelling assumptions' and an asymptotic argument.

In the case of this ANOVA, these are roughly:

A) Modelling assumptions: variation between treatments only manifests as variations in means, any measurement imprecision is distributed Normally (a bell curve).

B) Null hypothesis: There is no difference in mean yields between Croppa and Melba

C) Asymptotic argument: assume that B is true, then what is the probability of observing the difference in yields in the experiment assuming we have an infinitely large sample or infinitely many repeated samples? We can find this through the use of the Normal distribution (or more specifically for ANOVAs, a derived F distribution, but this specificity doesn't matter).

The combination of B and C is called a hypothesis test.
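A rough sketch of A, B and C for the Croppa and Melba example. The unit masses are invented, and instead of looking up the F distribution the code imitates 'many repeated experiments' directly by simulating data under the null hypothesis, which is a swapped-in approximation rather than the usual textbook calculation.

```python
import random

def f_statistic(group_a, group_b):
    """One-way ANOVA F statistic for two groups."""
    groups = (group_a, group_b)
    n_total = len(group_a) + len(group_b)
    grand = (sum(group_a) + sum(group_b)) / n_total
    means = [sum(g) / len(g) for g in groups]
    between = sum(len(g) * (m - grand) ** 2 for g, m in zip(groups, means))
    within = sum((y - m) ** 2 for g, m in zip(groups, means) for y in g)
    return between / (within / (n_total - 2))  # between has 1 degree of freedom

def simulated_p_value(group_a, group_b, n_sim=5000, seed=0):
    """Share of simulated null experiments whose F is at least the observed F.

    Under B (no difference in means) and A (Normal noise) the F statistic does
    not depend on the common mean or variance, so standard Normal data suffice.
    """
    rng = random.Random(seed)
    observed = f_statistic(group_a, group_b)
    exceed = 0
    for _ in range(n_sim):
        fake_a = [rng.gauss(0.0, 1.0) for _ in group_a]
        fake_b = [rng.gauss(0.0, 1.0) for _ in group_b]
        exceed += f_statistic(fake_a, fake_b) >= observed
    return exceed / n_sim

# Hypothetical vegetation masses for the 10 units of each field.
melba = [4.1, 3.8, 4.4, 4.0, 3.9, 4.3, 4.2, 3.7, 4.0, 4.1]
croppa = [4.6, 4.2, 4.8, 4.5, 4.3, 4.9, 4.4, 4.1, 4.7, 4.5]
f_obs = f_statistic(melba, croppa)
p_value = simulated_p_value(melba, croppa)
print(f"F = {f_obs:.2f}, simulated p-value = {p_value:.4f}")
```

The probability produced here is a frequency over imagined repetitions of the experiment, which is where the frequentist interpretation enters.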

The frequentist interpretation of probability is used in C. This is because a probability is assigned to the observed difference by calculating on the basis of 'what if we had an infinite sample size or infinitely many repeated experiments of the same sort?' and the derived distribution for the problem (what defines the randomness in the model).

An alternative method of analysis, a Bayesian analysis, would allow the same modelling assumptions (A), but would base its conclusions on the following method:

A) the same as before

B) Define what is called a prior distribution on the error variance.

C) Fit the model using Bayes Theorem.

D) Calculate the odds ratio of the statement 'Croppa is better than Melba' to 'Croppa is worse than or equal to Melba' using the derived model.

I will elide the specifics of fitting a model using Bayes Theorem. Instead I will provide a rough sketch of a general procedure for doing so below. It is more technical, but still only a sketch to provide an approximate idea.

Bayes theorem says that for two events A and B and a probability evaluation P:

P(A|B) = P(B|A)P(A) / P(B)

where P(A|B) is the probability that A happens given that B has already happened, the conditional probability of A given B. If we also allow P(B|A) to depend on the data X, we can obtain P(A|B,X), which is called the posterior distribution of A.

For our model, we would have P(B|A) be the likelihood as obtained in frequentist statistics (modelling assumptions), in this case a normal likelihood given the parameter A = the noise variance of the difference between the two quantities. And P(A) is a distribution the analyst specifies without reference to the specific values obtained in the data, supposed to quantify the a priori uncertainty about the noise variance of the difference between Croppa and Melba. P(B) is simply a normalising constant to ensure that P(A|B) is indeed a probability distribution.

Bayesian inference instead replaces the assumptions B and C with something called the prior distribution and likelihood, Bayes Theorem and a likelihood ratio test. The prior distribution for the ANOVA is a guesstimate of how variable the measurements are without looking at the data (again, approximate idea, there is a huge literature on this). This guess is a probability distribution over all the values that are sensible for the measurement variability. This whole distribution is called the prior distribution for the measurement variability. It is then combined with the modelling assumptions to produce a distribution called the 'posterior distribution', which plays the same role in inference as modelling assumptions and the null hypothesis in the frequentist analysis. This is because the posterior distribution then allows you to produce estimates of how likely the hypothesis 'Croppa is better than Melba' is compared to 'Croppa is worse than or equal to Melba', which is called an odds ratio.
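A sketch of the Bayesian steps for the same example, under assumptions that are mine rather than part of the description above: flat priors on the two field means, an inverse-gamma prior on the noise variance (its shape and rate are arbitrary), and the same invented masses as a frequentist analysis might use. With those choices the posterior can be sampled directly.

```python
import random

def posterior_odds_croppa_better(melba, croppa, prior_shape=2.0, prior_rate=1.0,
                                 n_draws=20000, seed=0):
    """Monte Carlo estimate of P(Croppa mean > Melba mean) / P(otherwise)."""
    rng = random.Random(seed)
    n_m, n_c = len(melba), len(croppa)
    mean_m, mean_c = sum(melba) / n_m, sum(croppa) / n_c
    sse = (sum((y - mean_m) ** 2 for y in melba)
           + sum((y - mean_c) ** 2 for y in croppa))
    # Conjugate update of the inverse-gamma prior on the noise variance.
    post_shape = prior_shape + (n_m + n_c - 2) / 2
    post_rate = prior_rate + sse / 2
    better = 0
    for _ in range(n_draws):
        # The reciprocal of a Gamma(shape, rate) draw is an inverse-gamma draw.
        variance = 1.0 / rng.gammavariate(post_shape, 1.0 / post_rate)
        # Given the variance, the difference in means is Normal.
        diff_sd = (variance * (1 / n_m + 1 / n_c)) ** 0.5
        better += rng.gauss(mean_c - mean_m, diff_sd) > 0
    worse = n_draws - better
    return better / worse if worse else float("inf")

# Hypothetical vegetation masses for the 10 units of each field.
melba = [4.1, 3.8, 4.4, 4.0, 3.9, 4.3, 4.2, 3.7, 4.0, 4.1]
croppa = [4.6, 4.2, 4.8, 4.5, 4.3, 4.9, 4.4, 4.1, 4.7, 4.5]
odds = posterior_odds_croppa_better(melba, croppa)
print(f"posterior odds that Croppa is better: {odds:.1f}")
```

No null hypothesis and no imagined repetition of the experiment is used; the odds are read off the posterior distribution of the difference.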

The take home message is that in a frequentist hypothesis test - we are trying to infer upon the unknown fixed value of a population parameter (the difference between Croppa and Melba means), in Bayesian inference we are trying to infer on the posterior distribution of the parameters of interest (the difference between Croppa and Melba mean weights and the measurement variability). Furthermore, the assignment of an odds ratio in Bayesian statistics does not have to depend on an asymptotic argument relating the null hypothesis and alternative hypothesis to the modelling assumptions. Also, it is impossible to specify a prior distribution through frequentist means (it does not represent the long run frequency of any event, nor an observation of it).

Without arguing which is better, this should hopefully clear up (to some degree) my disagreement with @Jeremiah and perhaps provide something interesting to think about for the mathematically inclined. -

Chance: Is It Real?

Is it really that difficult to disentangle these two ideas:

1) Statistics deals with random events.

2) Probability's interpretation.

? -

Chance: Is It Real?

Markov Chain Monte Carlo uses a Bayesian interpretation of probability. The methods vary, but they all compute Bayesian quantities (such as 'full conditionals' for a Gibbs Sampler). If you've not read the thread I made in response to you, please do so. You're operating from a position of non-familiarity with a big sub-discipline of statistics. -

Interpretations of Probability

If you're a strict Bayesian the vast majority of applied research is bogus (since it uses hypothesis tests). If you're a strict frequentist you don't have the conceptual machinery to deal with lots of problematic data types, and can't use things like Google's tailored search algorithm or Amazon's recommendations.

In practice people generally think the use of both is acceptable. -

Interpretations of Probability

One last thing I forgot: you can see that there was no mention of the measure theoretic definition of probability in the above posts. This is because the disagreement between frequentist and Bayesian interpretations of probability is fundamentally one of parameter estimation. You need the whole machinery of measure theoretic probability to get rigorously to this part of statistical analysis, so the bifurcation between the interpretations occurs after it.

-

Chance: Is It Real?

Honestly, direct mockery and non-collaboration from the frequentist paradigm of statistics was something that troubled the field up until (and after) the production of Markov Chain Monte Carlo - though Bayesians definitely shot back. After the discovery of Markov Chain Monte Carlo, Bayesian methods had to be taught as a respectable interpretation of probability because of the sheer pragmatic power of Bayesian methods. If you sum up the citations of the founding papers of MCMC, you get somewhere in the region of 40k citations. Nowadays you can find a whole host of post Bayesian/frequentist papers that, for example, translate frequentist risk results in terms of implicit prior distributions and look at frequentist/large sample properties of Bayesian estimators.

-

Interpretations of Probability

Summary:

Frequentist - fixed population parameters, hypothesis tests, asymptotic arguments.

Bayesian - random population parameters, likelihood ratio tests, possible non-reliance on asymptotic arguments.

Major disagreement: interpretation of the prior probability. -

Chance: Is It Real?

The notion that it is 'repeated' is absent from the measure theoretic definition. The 'long-run frequency' interpretation of probability is actually contested.

-

Chance: Is It Real?

The reason I used intuitive rather than mathematical description was because nobody knows what sigma algebras and measurable functions are. -

Chance: Is It Real?

Ok. This is a random variable:

Let (O,S,P) be a probability space where O is a set of outcomes, S a sigma algebra on the set of outcomes and P a probability measure on S, then a random variable X is defined as a measurable function on (O,S,P) to some set of values R. A measurable function is a function X such that the pre-image of every measurable set is measurable. Typically, we have:

O is (some subset of) the real numbers, S is the Borel sigma algebra on O and R is the real numbers. Measurability here is defined with respect to the sigma algebras of the measurable spaces (O,S) and (R,B(R)), where B(R) is the set of Borel sets on R. In this case we have a real probability space and a real valued random variable X.

A probability measure is a measure P such that P(O)=1. It inherits the familiar properties of probability distributions from introductory courses by saying that it is a measure. The missing properties are entailed by something called sigma-additivity, which is that if you take a countable sequence of pairwise disjoint sets C_n in S, then P(Union C_n over n) = Sum(P(C_n) over n).
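For a finite toy case these definitions can be checked by brute force. The sketch below assumes a fair six-sided die, takes the power set as the sigma algebra and the normalised counting measure as P; none of these choices comes from the references, they are just the smallest example I could think of.

```python
from fractions import Fraction
from itertools import chain, combinations

outcomes = (1, 2, 3, 4, 5, 6)  # O: faces of a hypothetical fair die

def power_set(items):
    """All subsets of a finite set; this is the sigma algebra S used here."""
    subsets = chain.from_iterable(
        combinations(items, r) for r in range(len(items) + 1))
    return {frozenset(s) for s in subsets}

sigma_algebra = power_set(outcomes)

def prob(event):
    """P: normalised counting measure, so P(O) = 1."""
    return Fraction(len(event), len(outcomes))

def is_even(outcome):
    """A random variable X from O to the set of values {0, 1}."""
    return int(outcome % 2 == 0)

def preimage(random_variable, values):
    """Set of outcomes that the random variable maps into `values`."""
    return frozenset(o for o in outcomes if random_variable(o) in values)

# Measurability: the pre-image of every set of values is an event in S.
measurable = all(preimage(is_even, v) in sigma_algebra
                 for v in ({0}, {1}, {0, 1}, set()))
# Additivity on disjoint events.
a, b = frozenset({1, 2}), frozenset({5})
additive = prob(a | b) == prob(a) + prob(b)

print(measurable, additive, prob(preimage(is_even, {1})))
```

Nothing in the check refers to repeated rolls or to anyone's beliefs; the probability of 'X = 1' is just the size of a pre-image relative to the whole space.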

If you would like a quick reference to these ideas: see this. Chapter 3 contains the definition of measures and measurable spaces (also including discrete random variables which I haven't here). Chapter 8 itself studies probability measures. -

Chance: Is It Real?

I did not intend you to feel intimidated or patronised, so please try to be less aggressive.

Also, if you're going to give a reference, then give a direct reference, and not a "it is somewhere in that general direction." Statistics is the second degree I am working on; writing was my first, and I know that that is a poor citation.

My posts aren't intended to be parts of academic papers, that would be boring. But if it helps, here is a description of some of the references I gave:

The first two links in 'here' and 'are' have the definition within the first two pages. The last is literally a whole course on measure theoretic probability, the definition and intuitive explanations thereof is contained in the section labelled 'Random Variables'. It goes from the intuitive notions you will have already met and the formalistic notions you will see if you take a course in measure theoretic probability towards the end.

Yes, a non measure-theoretic definition of probability is what is used in introductory stats courses and wherever the measure theoretic properties are irrelevant. Probability being 'the frequency of possible outcomes from repeated random events' is the frequentist intuition of probability. It is arguably incompatible with the use of Bayes' Theorem to fit models because of 1) the existence of a prior distribution and 2) the interpretation of population parameters as random variables.

Measure-theoretic probability governs the elementary things in both of these approaches - random variables and probability distributions - and so clearly implies neither. -

Chance: Is It Real?

It's a summary of the mathematical definition of random variables and probability in terms of measure theory. Here are a few references.

When mathematicians and statisticians speak about probabilities, they are secretly speaking about these. The definitions are consistent with both frequentist (frequency, asymptotic frequency) and Bayesian (subjective probability) philosophical interpretations. They are also consistent with more general notions of probability in which probability distributions represent neither, such as in Bayesian shrinkage and frequentist regularization approaches. -

Chance: Is It Real?

Mathematically probability doesn't have to resemble either 'the long term frequency of events' or 'a representation of a subjective degree of belief and evidence for a proposition'. Its definition is compatible with both of these things.

To understand what it's doing, we need to look at what a random variable is. A random variable is a mapping from a collection of possible events and rules for combining them (called a sigma algebra) to a set of values it may take. More formally, a random variable is a measurable mapping from a probability space to a set of values it can take. Intuitively, this means that any particular event that could happen for this random variable takes up a definite size in the set of all possible events. A random variable X is said to have probability measure P if, for any set of values A, the probability that X takes a value in A is the size P(E) of the event E that makes X take a value in A.
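As a rough sketch of that definition, assume a toy probability space of two fair dice (my choice of example, not something from the references). The random variable `X` is just a function on outcomes, and the probability that it lands in a set of values is the size of the event that sends it there. The names `omega`, `P_outcome` and `prob_X_in` are hypothetical.

```python
from fractions import Fraction
from itertools import product

# Hypothetical probability space: two fair dice, every outcome equally likely.
omega = list(product(range(1, 7), repeat=2))
P_outcome = {w: Fraction(1, len(omega)) for w in omega}

def X(w):
    """A random variable: maps each outcome (a pair of faces) to their sum."""
    return w[0] + w[1]

def prob_X_in(values):
    """P(X in values): the size of the event {w : X(w) in values}."""
    event = [w for w in omega if X(w) in values]
    return sum(P_outcome[w] for w in event)

print(prob_X_in({7}))      # 1/6
print(prob_X_in({2, 12}))  # 1/18
```

Nothing here refers to repeated trials or to degrees of belief; the only ingredients are a space of outcomes, a size for each event, and a mapping.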

There's nothing in here specifically about 'frequency of possible outcomes', since for continuous* probability spaces the probability of any specific outcome is 0.
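A worked illustration, assuming X is uniformly distributed on [0,1] (an example I am choosing for concreteness): any single point x sits inside an interval of width 2ε for every ε > 0, and the probability of that interval is at most 2ε.

```latex
\[
P(X = x) \;\le\; P(x - \varepsilon \le X \le x + \varepsilon) \;\le\; 2\varepsilon
\quad \text{for every } \varepsilon > 0,
\qquad \text{so} \qquad P(X = x) = 0 .
\]
```

Intervals still have positive probability under the same assumption, for instance P(0.2 ≤ X ≤ 0.5) = 0.3, so the information is carried by the sizes of sets rather than by counts of individual outcomes.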

Fundamentally, all probability is an evaluation of the size of a set with respect to the size of other sets in the same space.

*specifically non-atomic probability measures -

Do emotions influence my decision making

Emotions being required for decision making has already been covered, but your first sentence in the post:

Am i right in saying that decisions are made by the brains calculations when required to make a decision, analysing all the factors of risk, outcome, requirements to perform it etc etc. — Andrew

can be addressed further. It's quite well understood that humans don't make the vast majority of their decisions through an implicit, objective cost-benefit analysis. Ratiocination is lazy and usually substitutes intuitive easy problems for a hard problem, and these don't always produce the correct answer. Ratiocination is also heavily influenced by priming effects: for example, there are studies showing that when subjects are exposed to revolting images and then given a quick political affiliation test, they come out more conservative than subjects not shown the revolting images.

Even in scenarios where we expect experienced and disciplined thinkers to give judgements, humans usually fall foul of all kinds of contextual effects. For example, there's a famous data set of court proceedings in a Jerusalem court [can't remember what it was for], giving whether the defendant was convicted or not and the time of their court case. Conviction rates were higher when the judges hadn't had their coffee or towards the end of the day when they were tired.

This kind of thing also occurs for decisions we are not conscious of making. There's a study which asked subjects to fill out various opinions on the elderly, then move to a different office to complete a largely irrelevant batch of questions. Subjects were shown where both offices were before taking the first test. The control group was instead asked to answer questions about something largely irrelevant, then to go to the other office after completion. The subjects were timed from leaving the first office to entering the second, and the interesting result is that those who were asked to think about the elderly moved a lot slower than those who weren't.

If you would like to see more of this kind of research, the book 'Thinking, Fast and Slow' by Daniel Kahneman has an excellent battery of references, and the seminal paper 'Judgment under Uncertainty: Heuristics and Biases' has a legal free download if you Google it. -

A Sketch of the Present

Part of what's at stake in my post is the attempt to move away from 'psycologizing' explanations: things like saying 'ah, if only people would change their attitudes, think differently, engage with the world in a more productive way', etc. — SLX

I didn't mean to suggest that this surface-level observation of a current cultural malaise sufficed for an analysis. You're right in suggesting that there are questions of cause and enabling mechanism for any cultural observation. The purpose I had in mind in mentioning the degradation of mythopoesis on the societal and individual level was to provide a category or summary of the phenomenon - a generalisation on the same level of abstraction (so to speak) as what was generalised. This was purely descriptive, the purpose being to enable questions such as 'What historico-economic conditions have enabled the widespread degradation of mythopoetic structures?', and also to locate a temporalising element (denying futurity) which is fundamental to this degradation. Methodologically, this was meant to instantiate these questions in terms of their effect in a generalised human; the kind of human that reacts in the predictable way to zero hours contracts, wages lagging inflation, the destruction of career choices and the widespread privatisation of common goods.

I'm an old school historical materialist: look at the conditions - the political economy and beyond - which give rise to such attitudes, and direct change at that level. The modern obsession with self-help, motivational and inspirational books and speakers and so on are basically signs of resignation, another emblem of depoliticization which aims to change individual to fit structure, rather than structure to fit individual, as it were. — SLX

While I think it's true that advocating a solution in terms of re-training individuals to have mythopoetic structures is a band-aid on a gangrenous limb, I think you've elided the question: what would motivate such a depoliticised and fragmented individual to collectively organise with other depoliticised and fragmented individuals? Especially when a thorough analysis of economic power structures (such as the enabling conditions for the TTIP) renders action on the level of the country too small to effect change in any predictable manner? Perhaps this is unfair; a deeper and more appropriate question would be: what are the enabling conditions for collective organisation to address the historico-political structures that are screwing us over? -

A Sketch of the Present

There are a lot of things to respond to in your post, but I can give you two immediate thoughts that I had.

The ultimate effect of all this is a massive disorientation of what I might call existential intelligibility. Permanently poised to fall off the wagon - socially, economically, biologically - the categories we use to make sense of things are themselves permanently rendered precarious and thus not very useful. At any point, we are prone to the threat of catastrophe (even if it doesn't eventuate), along all the various axes outlined above, and their convergence into an locus of indistinction. This indistinciton, this loss of existential intelligibility, functions as a licence for the worst possible atrocities and our inability to deal with them. Hence the devastation wrought upon the poor, migrants, the environment, the climate, and more. — SLX

I think the disorientation you describe is present, but not 'new'; Rick Roderick noticed it in the 1990s:

How [does a way of life] break down? Well, here there is an analogy – for me – between the social and the self under siege, in many ways. In many ways, not in a few, and some of the symptoms we see around us that our own lives are breaking down and the lives of our society is a generalised cynicism and scepticism about everything. I don’t know how to characterise this situation, I find no parallel to it in human history. The scepticism and cynicism about everything is so general, and I think it’s partly due to this thing I call banalisation, and it’s partly due to the refusal and the fear of dealing with complexity. Much easier to be a cynic than to deal with complexity. Better to say everything is bullshit than to try to look into enough things to know where you are. Better to say everything is just… silly, or pointless, than to try to look into systems of this kind of complexity and into situations of the kind of complexity and ambiguity that we have to deal with now. — Rick Roderick, Self Under Siege

And on banalisation:

Anyway, that’s rationalisation, and then the third – and this is sort of one of my own if you will forgive me – is what I’ll call banalization. And it’s always a danger when you do lectures like the ones I am doing now, and that’s to take these fundamentally important things like what does my life mean, and surely there must be a better way to organise the world than the way it is organised now, surely my life could have more meaning in a different situation. Maybe my life’s meaning might be to change it or whatever, but to take any one of these criticisms and treat them as banalities. This is the great – to me – ideological function of television and the movies. However extreme the situation, TV can find a way to turn it into a banality. — Rick Roderick, Self Under Siege

Blaming TV is a bit outdated. We should probably blame the internet in its place at this point. Regardless, the thrust of the comments implicates a generalised breakdown of people's engagement with life-planning or 'bigger than ourselves' ideals. The collapse of this mythopoetic structure can probably partly explain the rise of public intellectual Jordan Peterson, whose main goal is to 'shove the meaning back into life' at the level of myths (even if he blames postmodernism, whatever that is, for the collapse of these mythopoetic structures rather than diagnosing it as arising in the wake of their collapse).

Perhaps we should tolerate a thematic reliance on the death of God, in a generalised form. The generalisation would be that not only is the divine no longer an organising principle of life, neither is an individual's commitment to their own goals and self narrative (the smallest form of a mythopoetic structure). Perhaps we are dead and we have killed us.

Though, I don't think ascribing any agency to people at large for the death of our mythopoetic structures is particularly appropriate, given the [in my view commonplace] self-reports of diminishing control over our day to day lives [precarity]. I believe precarity and the collapse of our mythopoetic structures are two sides of the same coin. The observed incredible complexity and ever-present tragedy we are embroiled in, the 9-5 sword of Damocles, leaves us only one response. Believe nothing, hope for nothing, nothing is right.

This ravaging of our goal orientation temporalises humans in a strange way - we no longer have hopes for the future on the large scale. Occupy Wall Street was an excellent example of this, a movement without long term plans, a quiet 'No' to whatever annoyed them, and a [predictably] unceremonious dissipation. This was summarized in 2012 by Marxist blogger Ross Wolfe:

Gone are the days when humanity dreamt of a different tomorrow. All that remains of that hope is a distant memory. Indeed, most of what is hoped for these days is no more than some slightly modified version of the present, if not simply the return to a status quo ante — i.e., to a present that only recently became deceased. This is the utopia of normality, evinced by the drive to “get everything running back to normal” (back to the prosperity of the Clinton years, etc.). In this heroically banal vision of the world, all the upheaval and instability of the last few years must necessarily appear as just a fluke or bizarre aberration. A minor hiccup, that’s all. Once society gets itself back on track, the argument goes, it’ll be safe to resume the usual routine. — Ross Wolfe, The Charnel House, Memories of the Future

It's a small leap to relate this to the state of exception, which can perhaps be identified as a generating mechanism of precarity. -

Chance: Is It Real?

What other interpretations can there be for your statement:

It is possible that the cause of inaccuracies may be the evolution of the universe itself.

? -

Chance: Is It Real?

Claiming that the laws are changing also requires evidence. To be consistent with current physics, all changes must be within experimental error for all experiments (otherwise the change would have been noted). The idea that the laws change with time thus has no support, since all observations which could've supported it are, by construction, also consistent with constant laws of nature. You would also end up with some crazy things, like 'unchanging laws governing time evolution'.

A photon has energy equal to h*f, where h is Planck's constant and f is its frequency. Assume that this law holds before some time t, and that from t onwards the constant h is replaced by a new constant i. This induces a discontinuous jump. This could also be modelled as an 'unchanging law of nature' by instead having the energy be equal to h(x)*f, where h(x) is a function of time x such that h(x<t)=h and h(x>=t)=i. If it changed continuously you would also be able to have an 'unchanging physical law' by specifying a continuous functional form for h(x), and also estimate it precisely in experiments by measuring photon energy and dividing by the frequency. The law as it is now just makes this a straight line graph; the changing version would instead have h(x) as the graph, and would still be an 'unchanging physical law'.
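A minimal sketch of that argument, under made-up numbers: the jump time `t=10.0` and the replacement constant `i=7.0e-34` are purely hypothetical, and `h_of` and `photon_energy` are names I am inventing for illustration. Only `H` is a real value (the SI-defined Planck constant). The point is that a 'changing constant' is just one fixed law E = h(x)*f, and measuring energy divided by frequency recovers h(x) at each time.

```python
import math

# Planck's constant in joule-seconds (exact by SI definition).
H = 6.62607015e-34

def h_of(x, t=10.0, i=7.0e-34):
    """Hypothetical time-dependent 'constant': H before time t, i from t onwards."""
    return H if x < t else i

def photon_energy(f, x):
    """Energy of a photon of frequency f (Hz) at time x under the single law E = h(x) * f."""
    return h_of(x) * f

f = 5.0e14  # an arbitrary optical frequency in Hz
for x in (0.0, 9.9, 10.0, 20.0):
    estimate = photon_energy(f, x) / f
    # The estimate tracks h(x), so the jump at t would show up in the data.
    assert math.isclose(estimate, h_of(x))
    print(x, estimate)
```

If real measurements followed a curve like this, the curve itself would be written into the law, which is why a patterned change is indistinguishable from an unchanging law with an extra time dependence.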

In order to not be in a scenario equivalent to one with unchanging laws, you would require the changes in natural law to behave in a completely patternless manner, essentially adding a huge variance noise term to every single physical law. This is already falsified since, say, Planck's constant can be measured very precisely!

fdrake

- © 2026 The Philosophy Forum